For many IoT devices, the data they collect is of no value. Imagine a security camera recording the entrance to a building 24 hours a day. For a large portion of the day, the camera footage is of no use, because nothing is happening. A more intelligent system that activates only when necessary needs less storage capacity and reduces the amount of data that must be transmitted to the cloud.

Standard IoT devices, such as Amazon Alexa, transmit data to the cloud for processing and then return a response based on the algorithm’s output. In this sense, the device is just a convenient gateway to a cloud model, like a carrier pigeon between you and Amazon’s servers. The device is pretty dumb and fully dependent on the speed of the internet connection to produce a result.
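The security-camera scenario described above can be sketched as a simple on-device gate that keeps a frame only when it differs from an empty-scene reference. This is a minimal illustration, not how any particular product works: the flattened grayscale frame format, the function names, and the threshold value are all assumptions, and a real tinyML device would more likely run a small trained model than a pixel difference.

```python
from typing import List, Sequence

# Assumed frame format: a flat sequence of grayscale pixel values (0-255).
Frame = Sequence[int]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two equal-sized frames."""
    if len(a) != len(b):
        raise ValueError("frames must have the same number of pixels")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def select_frames_to_keep(frames: List[Frame], threshold: float = 10.0) -> List[int]:
    """Return indices of frames that differ noticeably from the background.

    The first frame is treated as the empty-scene background (an assumption
    made for this sketch). Only frames whose mean difference from it exceeds
    the hypothetical threshold would be stored or transmitted.
    """
    if not frames:
        return []
    background = frames[0]
    return [
        i
        for i, frame in enumerate(frames)
        if mean_abs_diff(background, frame) > threshold
    ]


if __name__ == "__main__":
    quiet = [20] * 16                    # nothing happening
    visitor = [20] * 8 + [200] * 8       # half of the scene changes
    footage = [quiet, quiet, quiet, visitor, visitor, quiet]
    kept = select_frames_to_keep(footage)
    print(f"kept {len(kept)} of {len(footage)} frames: {kept}")
```

In this toy run only the two frames containing the visitor pass the gate, so both the storage needed and the data sent to the cloud shrink in proportion to how rarely something happens.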

Developing IoT systems that can perform their own data processing is the most energy-efficient approach. AI pioneers have discussed this idea of “data-centric” computing (as opposed to the cloud model’s “compute-centric” computing) for some time, and we are now beginning to see it play out.

Transmitting data opens the potential for privacy violations. Such data could be intercepted by a malicious actor, and it becomes inherently less secure when warehoused in a single location (such as the cloud). Keeping data primarily on the device and minimizing communications improves security and privacy.

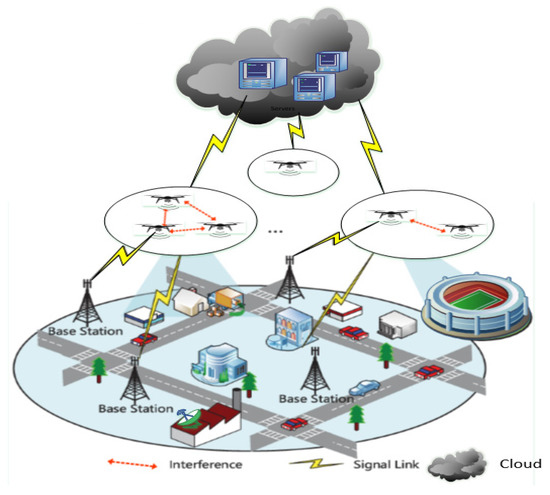

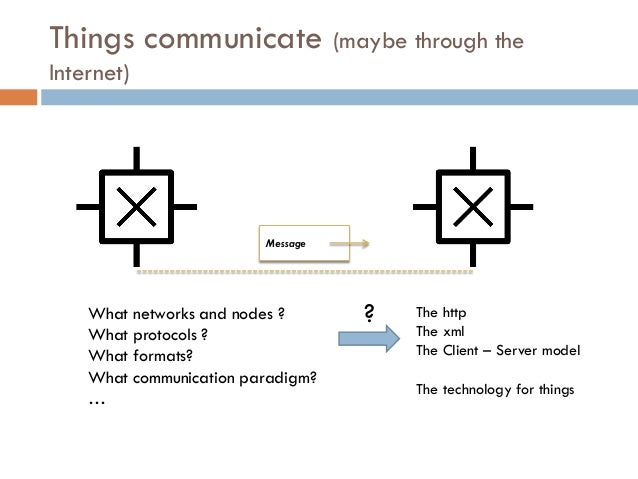

This came to a head recently with the release of GPT-3 (May 2020), whose network architecture contains a staggering 175 billion parameters, more than double the number of neurons in the human brain (~85 billion). This is more than 10x the parameter count of the next-largest neural network ever created, Turing-NLG (released in February 2020, containing ~17 billion parameters). Some estimates claim that the model cost around $10 million to train and used approximately 3 GWh of electricity (approximately the output of three nuclear power plants for an hour).

While the achievements of GPT-3 and Turing-NLG are laudable, their scale has naturally led some in the industry to criticize the increasingly large carbon footprint of the AI industry. However, it has also helped to stimulate interest within the AI community in more energy-efficient computing. Ideas such as more efficient algorithms, data representations, and computation have been the focus of a seemingly unrelated field for several years: tiny machine learning.

Tiny machine learning (tinyML) is the intersection of machine learning and embedded internet of things (IoT) devices. The field is an emerging engineering discipline that has the potential to revolutionize many industries. The main industry beneficiaries of tinyML are in edge computing and energy-efficient computing. TinyML emerged from the concept of the IoT. The traditional idea of IoT was that data would be sent from a local device to the cloud for processing. Some individuals raised concerns about this concept: privacy, latency, storage, and energy efficiency, to name a few.

Energy Efficiency. Transmitting data (via wires or wirelessly) is very energy-intensive, around an order of magnitude more energy-intensive than onboard computation (specifically, multiply-accumulate operations).
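To make the energy argument about transmission versus onboard computation concrete, here is a rough back-of-the-envelope sketch. All numbers are illustrative assumptions in relative units, not measurements: one multiply-accumulate (MAC) is taken to cost 1 unit, and sending one byte is taken to cost 10 units, which applies the "order of magnitude" figure per byte. The frame size and model size are likewise hypothetical.

```python
# Relative energy costs (assumed, not measured).
MAC_COST = 1.0            # energy of one multiply-accumulate, in arbitrary units
TX_COST_PER_BYTE = 10.0   # energy to transmit one byte, ~10x a MAC by assumption


def cloud_energy(raw_bytes: int) -> float:
    """Energy spent by the device when it ships all raw data to the cloud."""
    return raw_bytes * TX_COST_PER_BYTE


def on_device_energy(macs: int, result_bytes: int) -> float:
    """Energy spent running a model locally and sending only its result."""
    return macs * MAC_COST + result_bytes * TX_COST_PER_BYTE


def break_even_macs(raw_bytes: int, result_bytes: int) -> float:
    """Largest local model (in MACs) that still beats sending the raw data."""
    return (raw_bytes - result_bytes) * TX_COST_PER_BYTE / MAC_COST


if __name__ == "__main__":
    raw_bytes = 10_000     # hypothetical sensor reading or small image
    macs = 50_000          # hypothetical tiny model
    result_bytes = 1       # e.g. a single class label

    print("cloud:     ", cloud_energy(raw_bytes))
    print("on-device: ", on_device_energy(macs, result_bytes))
    print("break-even:", break_even_macs(raw_bytes, result_bytes), "MACs")
```

Under these assumptions the on-device path uses about half the energy of the cloud path, and it stays ahead as long as the local model needs fewer MACs than the break-even value. With different per-byte or per-MAC costs the conclusion could change, so the ratio is the parameter worth measuring on real hardware.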

Over the past decade, we have witnessed the size of machine learning models grow exponentially, driven by improvements in processor speeds and the advent of big data. Initially, models were small enough to run on local machines using one or more cores of the central processing unit (CPU). Shortly after, computation on graphics processing units (GPUs) became necessary to handle larger datasets, and it became more readily available thanks to cloud-based services such as SaaS platforms (e.g., Google Colaboratory) and IaaS offerings (e.g., Amazon EC2 instances). At this time, algorithms could still be run on single machines. More recently, we have seen the development of specialized application-specific integrated circuits (ASICs) such as tensor processing units (TPUs), which can pack the power of roughly 8 GPUs. These devices have been augmented with the ability to distribute learning across multiple systems in an attempt to grow larger and larger models.
